Pentagon Inks OpenAI Deal After Trump’s Anthropic Ban - Defense AI Battle Heats Up

The Pentagon just cut a major deal with OpenAI, right on the heels of the Trump administration's ban on rival Anthropic. It's a power move that reshapes the entire defense AI chessboard.

Why This Deal Changes Everything

Forget slow procurement cycles. This agreement signals a new era where the military bypasses traditional contractors to tap directly into bleeding-edge AI labs. The goal? To secure a decisive tech edge. The subtext? An intense, behind-the-scenes rivalry for government contracts that's more competitive than a crypto trading floor during a bull run.

The New Rules of Engagement

The Trump administration's ban on Anthropic didn't just remove a player; it cleared the path. Now, OpenAI has the Pentagon's ear (and budget). Expect rapid prototyping, custom model development, and a focus on autonomous systems, cybersecurity, and strategic simulation. It's a classic case of regulatory action creating a de facto monopoly, something Wall Street would applaud, if only the returns were as transparent as a blockchain ledger.

A Provocative, Yet Inevitable, Alliance

This partnership blurs lines between Silicon Valley innovation and Pentagon pragmatism. Critics will howl about ethics and 'killer robots.' Proponents will cite national security necessity. The cynical finance take? It's the ultimate venture capital play: secure a single, deep-pocketed, sovereign client, and your valuation is bulletproof—regardless of market sentiment. The race for AI supremacy isn't just about algorithms; it's about locking down the single biggest customer on Earth. Checkmate.

Under this OpenAI Pentagon deal, the company will send its own engineers to the Pentagon. Their job is to make sure the artificial intelligence tools work safely within the military’s high-security systems. OpenAI is now the top provider of this technology for the U.S. Defense Department, filling the gap left after Anthropic was forced out. While the transition seems smooth, many people are still asking whether using artificial intelligence to handle military secrets is the right move for the future.

Decoding the Ethical Guardrails in the New OpenAI Pentagon Deal

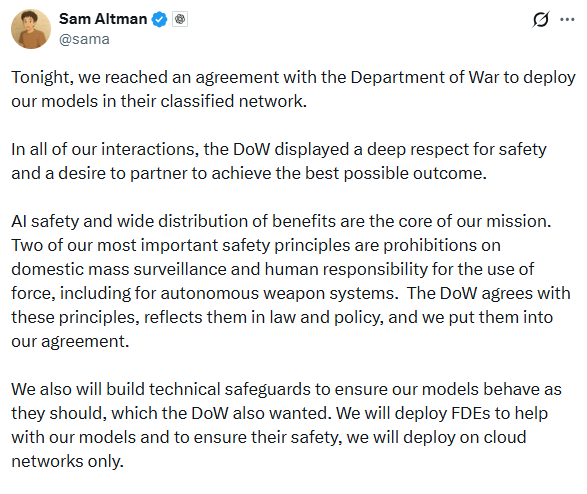

One notable part of the OpenAI Pentagon deal is the set of safety rules written into the contract. Sam Altman shared on social media that OpenAI has "red lines" that the military must follow. For example, the artificial intelligence cannot be used for spying on Americans at home. The military also cannot use the AI to control "killer robots" that make life-or-death decisions without a human in charge.

The Department of War (DoW) has agreed to these safety rules. This has caused some confusion among experts, because earlier the same day the government called Anthropic a "supply chain risk" for asking for these exact same rules. Defense Secretary Pete Hegseth said OpenAI acted in "good faith," while he accused Anthropic of being "woke" and difficult to work with. It seems the government prefers partners who are willing to cooperate closely with the current administration.

Amazon’s $50B Investment and Global Market Shift

While the defense deal solidified OpenAI’s political standing, a massive $50 billion investment from Amazon has restructured the company’s financial future. This commitment is part of a record-breaking $110 billion funding round that values OpenAI at a staggering $840 billion. For the market, this signals that artificial intelligence adoption is nearing a commercial turning point. The deal includes immediate funding and a long-term partnership that positions Amazon Web Services (AWS) as a central platform for OpenAI’s enterprise tools.

Technical Implementation and Industry Support

To keep things safe, OpenAI is building technical safeguards. The artificial intelligence will stay in secure cloud networks and will not be put directly into weapons like drones or missiles. This setup is meant to ensure that a human is always part of the decision-making process. OpenAI engineers will stay on-site to watch how the technology is used and to address any risks that come up.

This drama has brought many tech workers together. Hundreds of employees from Google and OpenAI signed a letter supporting Anthropic. They believe that companies should stand together against government pressure to build dangerous weapons. Even with this pushback, the OpenAI Pentagon deal is moving forward. It shows that the Trump administration wants to work with companies that follow its "America First" plan for technology.

Expert Analysis: Future Outlook

The current situation suggests a new kind of "AI Cold War." It is not just about the U.S. competing with other countries like China. It is also an internal struggle over who controls the ethics of AI. By banning one company and hiring another so quickly, the White House has set a powerful new precedent.

In the coming months, we will likely see a big legal battle. Anthropic has already said it plans to sue the government over the ban. Meanwhile, the success of the OpenAI Pentagon deal will depend on whether Sam Altman can keep his safety promises. If this deal works out, it will likely become the model for every other military technology contract in the future.

Government contracts and bans on tech companies can change stock prices and market trends. This report is for information only and is not financial or legal advice.